To explore how AWS user organizations are faring in the cloud, which services, tools and best practices IT leaders prioritize, and how they respond to growing security threats and compliance requirements, N2WS has published its 2020 AWS Cloud Data Protection Report. The report is based on a survey of attendees who came by our booth at AWS re:Invent 2019.

The response was enthusiastic: over 130 attendees answered 10 short questions in return for a bottle cap shooter gift. It was certainly epic fun firing the caps at our colleagues, but admittedly, launching them in a room full of thousands of eventgoers may not have been the best idea we ever had.

As a year has passed since our last AWS Cloud Data Protection Report, we have been able to build on these two consecutive years of data and provide insights into cloud utilization trends and see how our past predictions worked out. We were able to gather even more valuable information this year about these IT professionals and how they use AWS as they migrate and operate their servers using AWS’ services. It was obvious given the sheer number of people at the conference and its exponential growth each year (now up to 60K+!) that there are many benefits to having your production data on the cloud. However, we also saw pain points and even some concerning neglect in data management that prompted us to publish the results.

Here are the questions we asked:

- How are you currently protecting your AWS workloads?

- Which type of databases are you currently working with on AWS?

- How many EC2 instances do you have?

- How many AWS accounts do you have?

- How long do you retain backups in AWS?

- Where do you store backups for long term retention?

- Describe your current Disaster Recovery plan.

- How often do you carry out recovery drills?

- What best describes your process for recovering an application after an outage?

- Lastly, which of the following best describes your role?

Here are our takeaways:

It was alarming to see how many AWS survey respondents were still not automating data backup and recovery

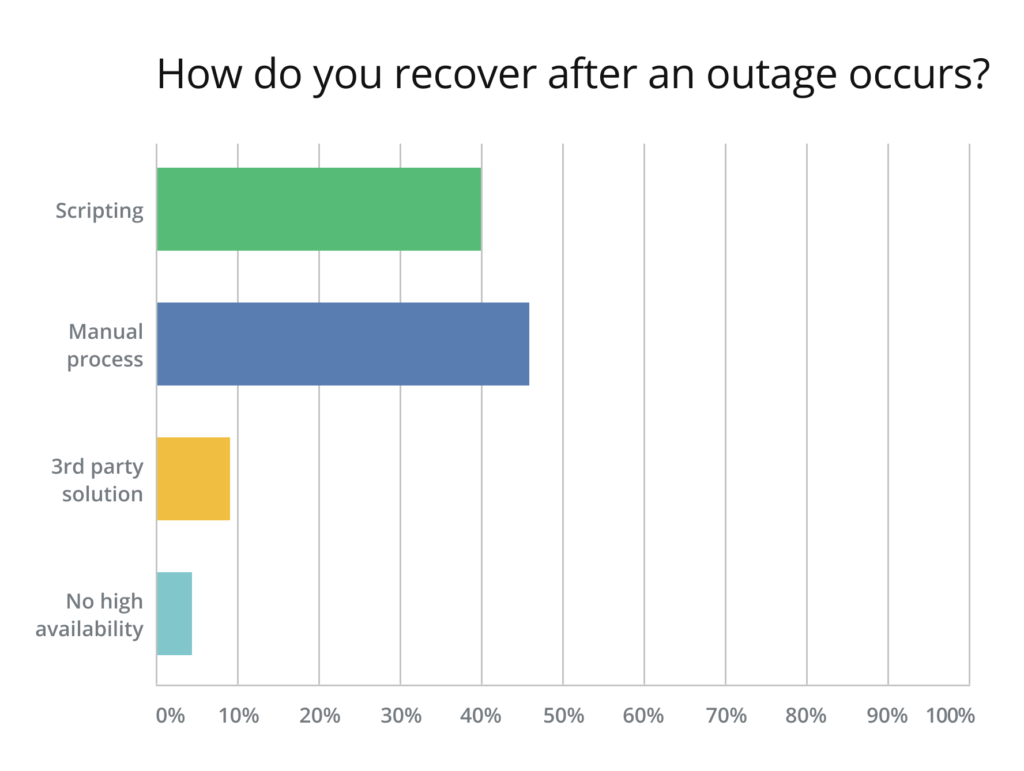

When we asked respondents to describe their process for recovering an application, over 40% said they use scripting, while almost 46% said they recover their applications manually. Only 9% were using a third-party solution to automate their backup and recovery process, and 4% had no high availability process at all.

Why is this so shocking? Even as Amazon Web Services (AWS) continues to dominate the public cloud market, it seems that automation of a key stage in data lifecycle management (backup and recovery) still isn’t in place for most enterprises. This means that many enterprises are relying on one person on their dev or backup team who knows every line of code. But what happens if that person is out sick, on holiday, or leaves the company entirely? What happens if a rewrite, change or urgent recovery is needed and your team of developers doesn’t have access to this knowledge? “Scripting is painful,” as one survey respondent put it succinctly, and it is simply not sustainable.

Automate, automate, automate

These survey results are even more alarming than they seem, because AWS users may not even be aware that automated backup of their data in the cloud is essential. A manual recovery process doesn’t provide the high availability needed when the inevitable downtime occurs, because things can fail, and will fail given enough time (in the words of Werner Vogels, VP and CTO of Amazon: “Everything fails all the time”). AWS users must understand that accidental deletion and other human errors, bugs, malicious attacks, and AWS outages (rare, but they happen) can result in damaging data loss, financial loss, or both, because recovery in these cases is neither straightforward, instant, nor anticipated.

The number of AWS accounts per client is going up

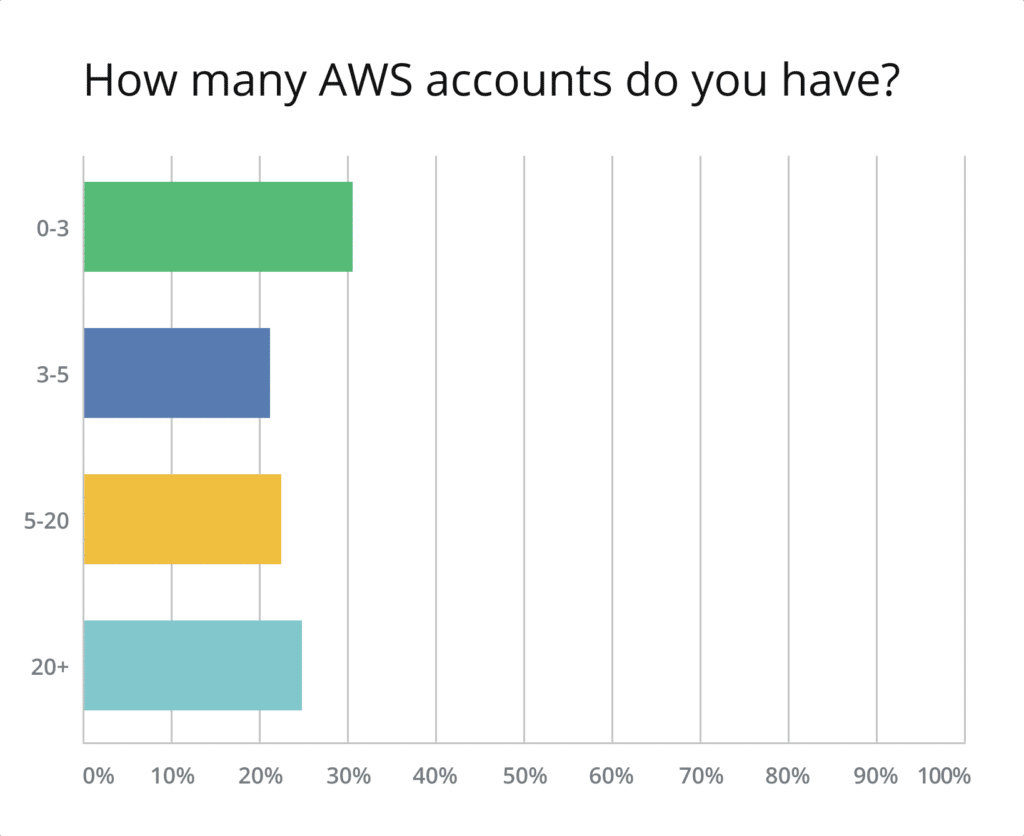

70% of those surveyed have more than three AWS accounts, signaling a clear movement from using only one AWS account to relying on many. While the environment segmentation within a single AWS account is often sufficient, having additional accounts provides better segregation and a higher level of security. And, though it is true that the complexity of an infrastructure as well as the overhead required to maintain it grows with the number of accounts, AWS provides services like Organizations, Transit Gateway, and Resource Access Manager to help you manage this complexity.

Additionally, around 22% of respondents stated that they are running between 5 and 20 AWS accounts, and 25% said that they work with over 20 AWS accounts. These numbers point out that the majority of AWS clients run fairly large cloud environments. It seems that even startups are not limiting themselves to a single account anymore.

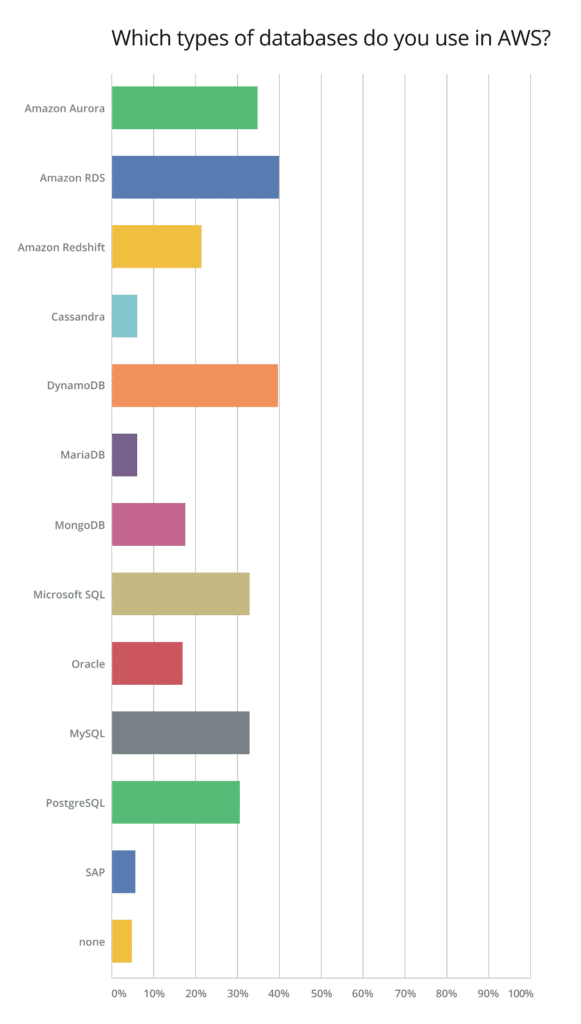

Managed databases still dominate the AWS Cloud

It is clear that Amazon RDS is still the dominant database service on AWS, although Amazon Aurora has been gaining traction. It appears that offloading database management tasks, such as capacity planning and hardware provisioning, has received the attention Amazon has been seeking. The introduction of Aurora Serverless has surely helped as well. DynamoDB continues to be one of the most heavily used AWS services overall.

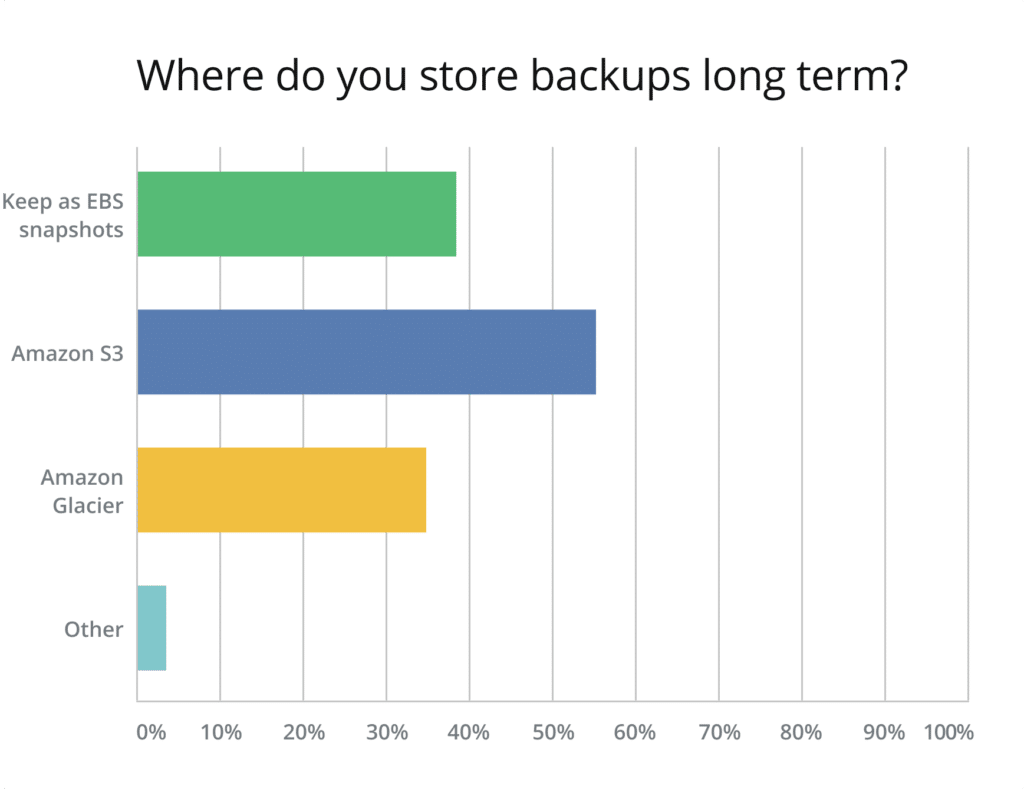

Long-term data storage is still mostly handled by S3

The majority of survey respondents, when asked about their long-term backup storage, answered that they still rely on S3. This number is about 30% more than the number of respondents using Amazon S3 Glacier, which is the service better suited to storing cold data (including backups). The cost of S3 is $0.023 per GB per month, while Glacier offers incredibly cheap storage at $0.004 per GB per month (not to mention the Glacier Deep Archive tier, which costs only $0.00099 per GB per month).
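To put those prices in perspective, here is a minimal sketch of the arithmetic in Python, treating each quoted price as a monthly per-GB rate. The 10 TB backup size and the function names are hypothetical, chosen only for illustration, and the calculation ignores retrieval fees, request charges and minimum storage durations, all of which affect real bills.

```python
# Per-GB monthly storage prices (USD) as quoted in the text above.
PRICE_PER_GB_MONTH = {
    "S3 Standard": 0.023,
    "Glacier": 0.004,
    "Glacier Deep Archive": 0.00099,
}


def monthly_cost(size_gb: float, tier: str) -> float:
    """Storage-only monthly cost for size_gb of data in the given tier."""
    return size_gb * PRICE_PER_GB_MONTH[tier]


def yearly_savings(size_gb: float, from_tier: str, to_tier: str) -> float:
    """Storage-only savings over 12 months from moving data between tiers."""
    return 12 * (monthly_cost(size_gb, from_tier) - monthly_cost(size_gb, to_tier))


if __name__ == "__main__":
    size_gb = 10_000  # hypothetical: roughly 10 TB of long-term backups
    for tier in PRICE_PER_GB_MONTH:
        print(f"{tier}: ${monthly_cost(size_gb, tier):,.2f} per month")
    saved = yearly_savings(size_gb, "S3 Standard", "Glacier")
    print(f"S3 Standard -> Glacier saves about ${saved:,.2f} per year")
```

For the hypothetical 10 TB, storage alone comes to roughly $230 per month on S3, $40 on Glacier and under $10 on Glacier Deep Archive.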

Last year, we predicted that cold storage options would be adopted at a much faster pace. It seems that many companies are transitioning slowly and continuing to use options that are not the most cost-efficient ones available.

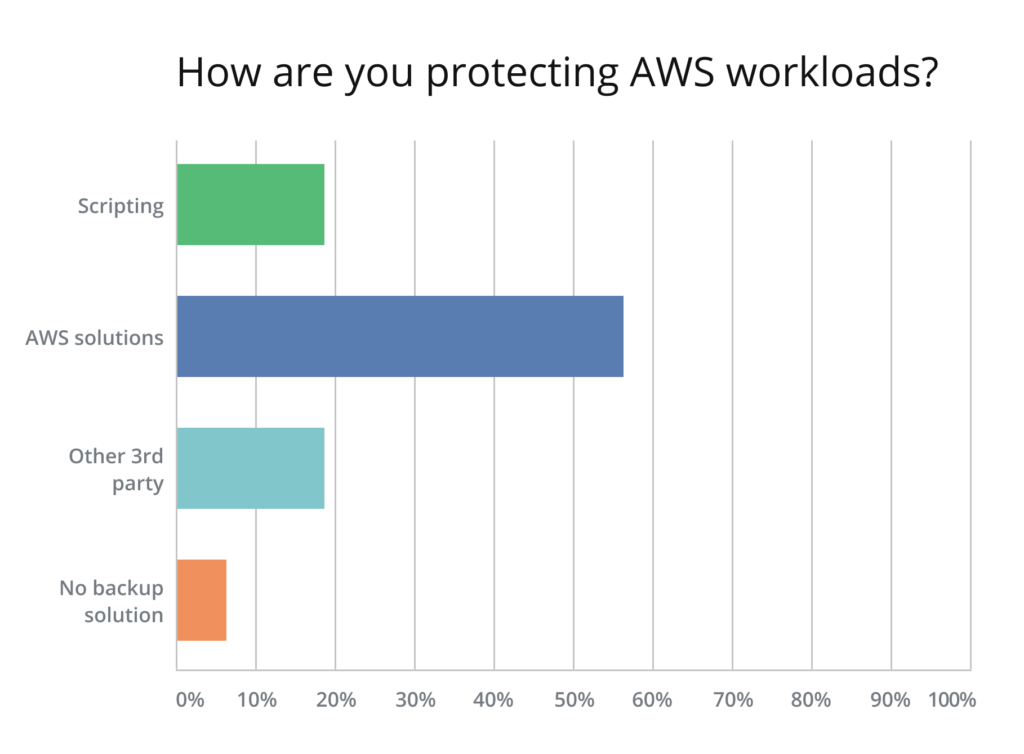

AWS native solutions are the primary tools for protecting cloud workloads

Almost 60% of survey respondents say that they use AWS native solutions like AWS Backup or Data Lifecycle Manager to protect their workloads in the cloud. That is a huge increase over the 9% who stated that they use cloud backup solutions in last year’s AWS Cloud Data Protection Report. Also, the use of homegrown scripts has gone down from 29% to 19%. Perhaps most importantly, only around 7% answered that they have no backup solution in place, compared to last year’s staggering 32%. Even though this is a huge step in the right direction, this number should be approaching zero.

These numbers seem more promising. However, given that data is scaling and that most AWS users have more accounts, instances and databases to manage, AWS native solutions may not be the clear winner when it comes to automation, elaborate scheduling, automatic colder tier storage, reporting, auditing (via logs) and minimizing risk by performing recovery orchestrations. Third-party native-to-AWS tools like N2WS Backup & Recovery provide these crucial advantages in order to avoid any holes in the backup and recovery process. It is clear based on the overall picture that many companies are still running a very risky environment. Hopefully, they won’t become the examples that are used as warnings for others.

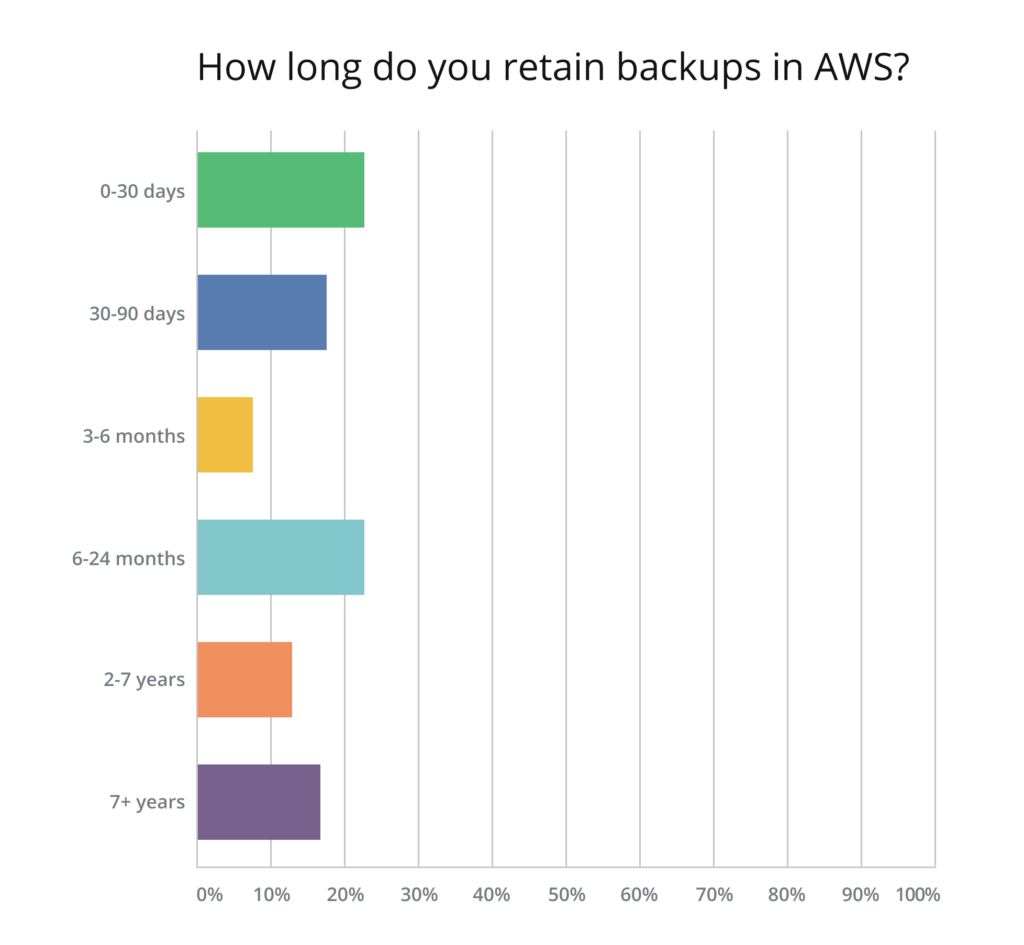

Longer Data Retention Periods are still a significant share of the pie

Compared to last year’s AWS Cloud Data Protection Report, data retention periods seem to have leveled off. The number of respondents keeping backups for up to 30 days, as well as the number retaining backups for between 30 and 90 days, has dropped slightly. Overall, though, it appears that most companies store their backups for either a very short period of time (up to 30 days) or between 6 and 24 months. Over 10% stated they keep backups for between 2 and 7 years, and just under 20% have a very long-term retention period of 7+ years. That is a significant share of the pie, especially given that compliance demands within virtually every industry are becoming more stringent.

Due to the high cost of storing data outside of cold storage and the still very high rate of S3 (as opposed to Glacier) use for long-term data retention, these numbers are somewhat understandable. With all the compliance requirements being imposed on organizations these days, we should expect to see higher rates of Glacier use as well as longer backup and data retention periods in the near future.
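One way to combine Glacier with a long retention period is an S3 lifecycle rule. The sketch below only builds a rule as a plain Python dictionary, in the shape accepted as the `LifecycleConfiguration` argument of the boto3 S3 client’s `put_bucket_lifecycle_configuration` call. The function name, the `backups/` prefix, the 30-day transition and the 7-year retention are all illustrative assumptions, not recommendations drawn from the survey.

```python
def backup_lifecycle_rule(prefix: str, days_to_glacier: int, retention_days: int) -> dict:
    """Build an S3 lifecycle configuration that moves objects under `prefix`
    to Glacier after `days_to_glacier` days and deletes them after
    `retention_days` days. (Hypothetical helper, for illustration only.)"""
    if retention_days <= days_to_glacier:
        raise ValueError("retention_days must be greater than days_to_glacier")
    return {
        "Rules": [
            {
                "ID": f"archive-{prefix.strip('/') or 'all'}",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                # Move objects to the Glacier storage class once they are cold.
                "Transitions": [
                    {"Days": days_to_glacier, "StorageClass": "GLACIER"}
                ],
                # Delete objects once the retention period has passed.
                "Expiration": {"Days": retention_days},
            }
        ]
    }


if __name__ == "__main__":
    # Assumed values: archive after 30 days, keep for about 7 years.
    config = backup_lifecycle_rule("backups/", 30, 7 * 365)
    print(config)
```

Nothing here talks to AWS. Applying the rule would mean passing the dictionary to an S3 client along with a bucket name, and the values should be checked against your own compliance requirements first.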

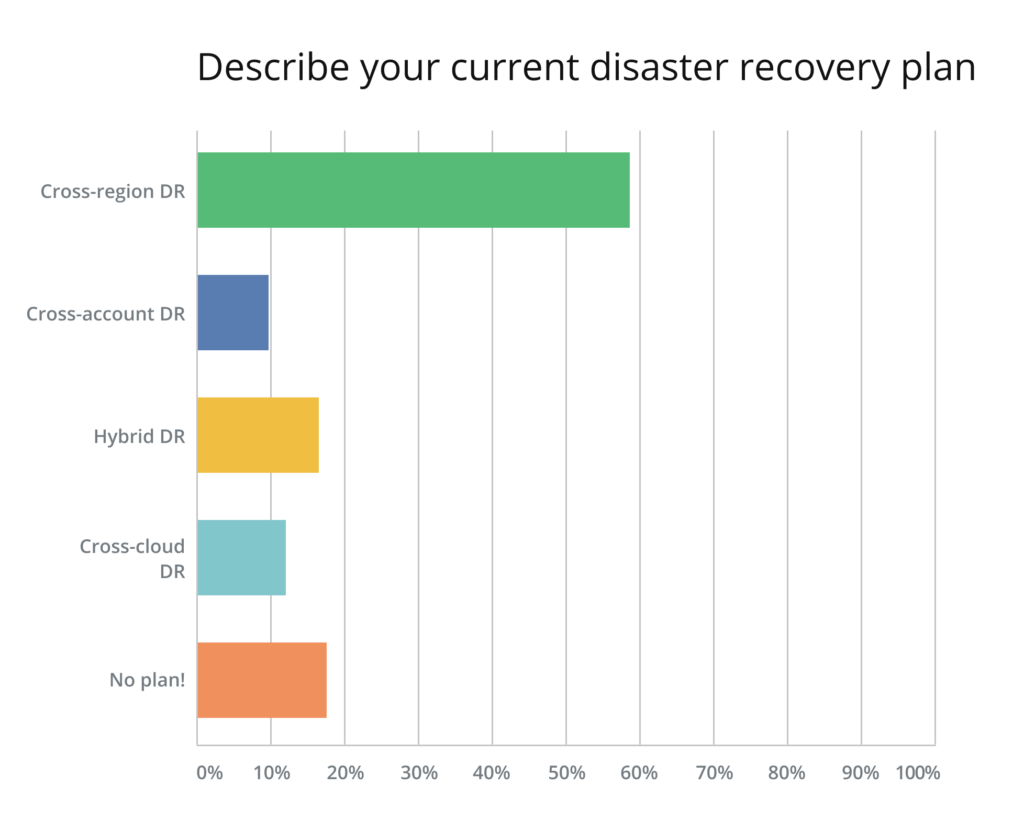

Companies Continue to Rely Heavily on Cross-Region DR but still not Cross-Account

The cloud can be a very dangerous place for a business if a system is not set up and used properly. In last year’s AWS Cloud Data Protection Report, we criticized the Disaster Recovery (DR) plan of most of our survey respondents, who relied on cross-region DR only. Having a cross-account plan in place ensures that a company is protected from a single account breach (don’t become the next Code Spaces), which is a much more likely scenario than a complete breach of multiple accounts.

The number of those who still rely solely on cross-region DR is as high as last year, illustrating that we have made almost no progress whatsoever in this area. Thankfully, the percentage of users who have no DR plan at all has gone down from 26% to below 20%. This is still a significant number, however. Just like the number of those without a backup solution, this number should be much closer to zero.

The use of cross-cloud DR has gone up a bit, from 7% to around 12%. This is a good sign, since cross-cloud DR is also a very good way to secure your cloud data. Each public cloud gives you a separate user account, allowing you to be protected against single-account breaches.

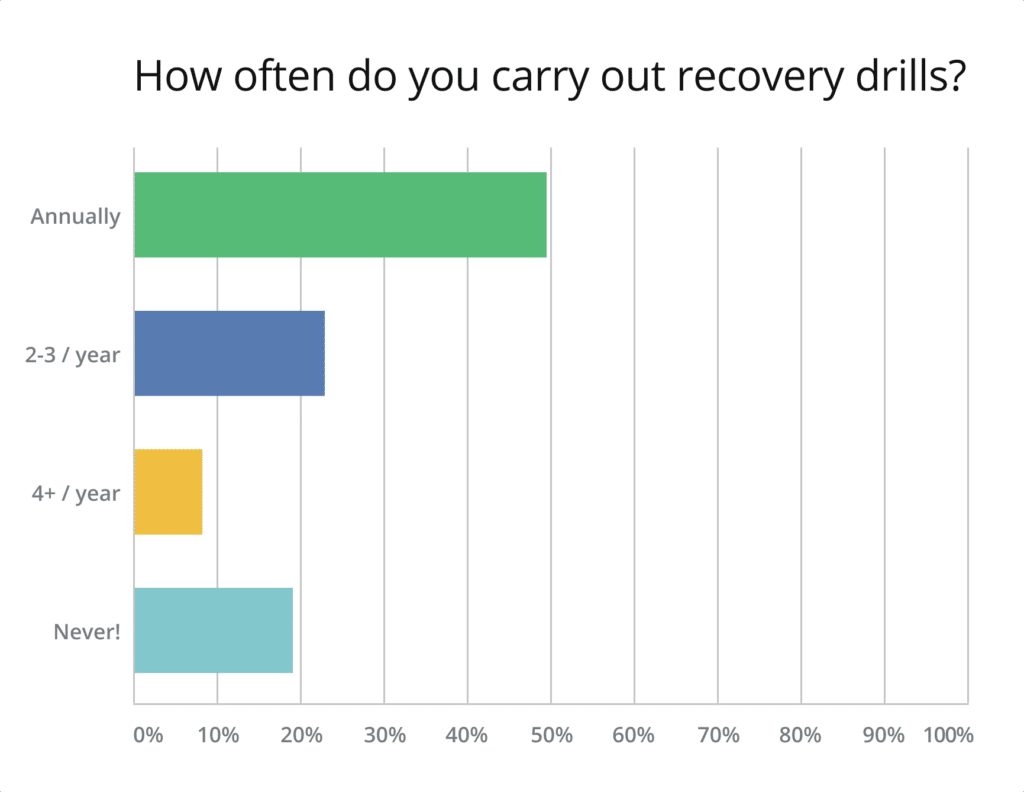

DR Recovery Drills Are Being Conducted More Often

Having a DR solution as well as a properly thought-out plan in place is only one part of the picture. It is also important for a business running in the cloud to ensure that their DR solution is reliable, making recovery drills a necessity.

Last year, almost 40% of those surveyed answered that they never conduct recovery drills. Thankfully, this number has gone down drastically this year, to under 20%. The number of those who only do the drills annually is still very high, but many more respondents state that they organize DR drills multiple times per year. Because the next outage is always looming just around the corner, we hope that more companies will take this process seriously.

AWS Cloud Data Protection Report Summary

This year’s results show that, while there is an overall improvement in dealing with security in the cloud, many companies lag behind when it comes to properly protecting their environments. It is evident that organizations still have reservations about, and lack knowledge of, security, data lifecycle management, regulatory demands and storage costs in the cloud.

Ensuring business continuity is of the utmost importance, and more needs to be done. Some may argue that increasing costs make it challenging to apply security measures; however, some steps, like creating a backup plan and running additional DR drills, incur no additional costs. That such steps are still being skipped is very troubling. We do hope that more companies will take cloud security seriously, and that next year we will see a drastic improvement in some of these numbers.

Luckily, awareness does seem to be growing to a certain extent, and tools like N2WS Backup & Recovery remove the need for manual recovery and scripting and ensure high availability for applications, data and servers (EC2 instances) running on AWS. N2WS supports backup, recovery and DR for many AWS services, including Amazon EC2, Amazon RDS (any flavor), Amazon Aurora, Amazon Redshift, Amazon DynamoDB and more.

Try the leading solution on the AWS Marketplace for protecting AWS environments, free for 30 days.