As companies move more of their production environments into the cloud, downtime can do substantial damage to both their bottom line and their customer base. Enterprises increasingly rely on cloud service providers to guarantee uptime and maintain service, yet outages still happen. The causes vary and include cloud vendor failures, database errors and insufficient failover mechanisms. While your cloud provider might well be the culprit for a service outage, there’s another elephant in the room that is often a major cause of outages today: faulty code. This includes logical pitfalls in your code, problems in service and code integrations, or simple human error in uploading bad code. Software failures of this nature are rampant. In this article, we’ll talk about how they come about and discuss ways to prevent them.

The Cost of Logical Failures

Changing your code can break a service. For example, Asana recently had a major outage due to a late code deployment; Google’s Compute Engine cloud went down due to a network update that hit a bug, followed by a failsafe software failure; and My Pingdom suffered downtime due primarily to human error. One way to prevent these errors is to update your code with a stepwise deployment that is pushed to users gradually, rather than a ‘big-bang’ deployment. Another, if necessary, is to ‘roll back’, reverting to the state you were in before the update was made.
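The stepwise deployment and rollback described above can be sketched roughly as follows. This is a minimal illustration, not a real deployment tool: `staged_rollout` and the three callbacks (`deploy_to`, `is_healthy`, `rollback`) are hypothetical names, and how you route traffic or measure health depends entirely on your own platform.

```python
from typing import Callable, Sequence


def staged_rollout(
    stages: Sequence[int],
    deploy_to: Callable[[int], None],
    is_healthy: Callable[[], bool],
    rollback: Callable[[], None],
) -> bool:
    """Push a release to growing percentages of users.

    Stops and rolls back at the first stage that fails the health check.
    Returns True if every stage passed, False if a rollback happened.
    All three callbacks are assumptions supplied by the caller.
    """
    for percent in stages:
        deploy_to(percent)      # expose the new code to `percent` of users
        if not is_healthy():    # e.g. error rate or latency check
            rollback()          # revert to the state before the update
            return False
    return True
```

A call such as `staged_rollout([1, 10, 50, 100], deploy_to, is_healthy, rollback)` exposes the change to 1% of users first, so a bad release is caught before it reaches everyone.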

The Upside of Continuous Delivery

In DevOps accelerated delivery, code is updated all the time. So if the test coverage (including integration and load tests) is not good enough, an update can harm a site, as was recently the case with Airbnb. To avoid this, you need to create or implement a solution that gives you complete failover and rollback capability. The upside of continuous delivery comes from confidence in the release process (since it’s done so often) and from the assurance that you’ll be able to find a problem reasonably fast (since you only have to look through a small number of changes).
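To illustrate why a small batch of changes is quick to search, here is one possible approach: a binary search over the ordered changes in a release. It is only a sketch under two assumptions that are not from the article: that the changes can be tested cumulatively, and that once a bad change is applied every later state also fails. The names `first_bad_change` and `is_good_after` are hypothetical.

```python
from typing import Callable, Optional, Sequence


def first_bad_change(
    changes: Sequence[str],
    is_good_after: Callable[[int], bool],
) -> Optional[str]:
    """Return the first change that breaks the system, or None if none does.

    `is_good_after(i)` is a caller-supplied check that tests the system
    with changes[0..i] applied. Assumes that once a bad change is in,
    every later state is also bad.
    """
    lo, hi = 0, len(changes)
    while lo < hi:
        mid = (lo + hi) // 2
        if is_good_after(mid):
            lo = mid + 1   # the culprit, if any, comes after mid
        else:
            hi = mid       # the culprit is at mid or earlier
    return changes[lo] if lo < len(changes) else None
```

With this approach the number of checks grows with the logarithm of the number of changes, so a release of a handful of commits needs only two or three checks to isolate the culprit.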

The result may be that you have fewer big problems and more small ones. There will be cases where 10 short downtime periods of five minutes each are worse than one longer downtime period of one hour, but usually the former is preferable. Lexus did not fare so well during a recent outage caused by a glitch in an overnight software update; the result was a significant outage that wiped out a large number of Lexus infotainment and navigation systems in customer vehicles.

Final Note

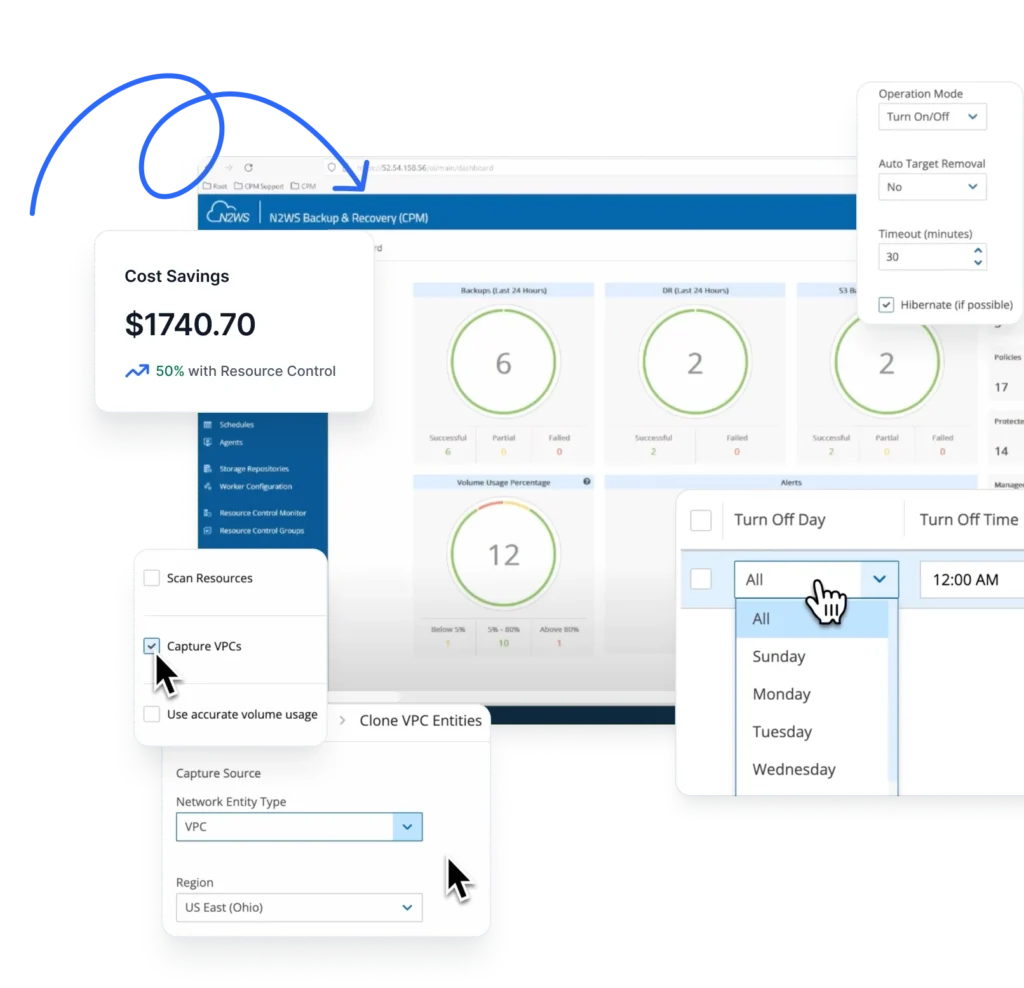

Enterprises utilizing AWS EBS for their cloud services can aim to avoid these outages by taking advantage of AWS’ vast backup and disaster recovery capabilities, such as spreading their application deployments across multiple availability zones and regions to reduce the risk of downtime. When it comes to Amazon, it’s not just about having the native snapshot mechanisms of Amazon (EC2), but also about having a robust and scalable process around the snapshot capability, so that in such cases you can roll back to a point-in-time version that is bug-free and have time to investigate what happened without harming your users. Solutions like N2WS for the AWS cloud can help resolve logical and code-related outages such as the ones discussed above, and give you the ability to go back to a desired point in time where the code is clean. N2WS provides flexible backup policies and scheduling, rapid recovery of instances, and a simple, intuitive web interface for managing backup operations.
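As a rough illustration of the point-in-time idea, the sketch below picks the most recent snapshot taken before a known bad deployment. It does not call AWS or N2WS; the `Snapshot` record and the `latest_snapshot_before` helper are hypothetical, and in practice the list of snapshots would come from whatever backup tooling you use.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class Snapshot:
    """Hypothetical record of one backup: an ID and when it was taken."""
    snapshot_id: str
    taken_at: datetime


def latest_snapshot_before(
    snapshots: Iterable[Snapshot],
    bad_deploy_at: datetime,
) -> Optional[Snapshot]:
    """Return the newest snapshot taken strictly before the bad deployment.

    Returns None when no snapshot predates the deployment, which means
    there is no clean point in time to restore to.
    """
    candidates = [s for s in snapshots if s.taken_at < bad_deploy_at]
    return max(candidates, key=lambda s: s.taken_at, default=None)
```

The more frequently snapshots are scheduled, the closer the chosen restore point sits to the moment just before the faulty code went live.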