An automated deployment environment. An agile methodology that merges the roles of development and operations. A set of tools and technologies that streamline the development lifecycle from planning through to deployment. However you define it, DevOps is making waves in the IT industry.

DevOps is also one of the key selling points of the cloud, and it’s already bringing significant benefits to enterprise cloud users through shorter development cycles, accelerated release times, improved error detection, fewer deployment failures and faster recovery times. Market leader AWS, in particular, offers an exceptionally large range of integration, monitoring and automation tools to choose from.

But what if you’re new to the cloud? How do you make a start when you’re spoilt for choice? And which automation tools do you really need to be familiar with on AWS? In this post, we’ve picked out five of the most powerful and widely used tools to consider when embarking on your DevOps journey.

1. Chef

Chef is an infrastructure management tool that allows you to configure, deploy and manage applications and servers using predefined, automated procedures. It can manage any machine capable of running the chef-client software, across physical, virtual, on-premises, cloud and hybrid environments. Each procedure is known as a recipe and holds a blueprint for building or updating your infrastructure. Rather than configuring each machine by hand, you store your recipes on the Chef server, and the chef-client on each node automatically applies the patches, updates, configuration changes and provisioning instructions they contain. Chef’s biggest strength lies in its ability to model IT infrastructure at scale from a centralized hub of information, which makes it particularly useful for maintaining visibility and control over large, complex enterprise IT environments.
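Real Chef recipes are written in a Ruby-based DSL and executed by chef-client, so the sketch below does not use Chef’s API. It is a minimal Python model of the idea behind a recipe, assuming a node’s state can be represented as a simple dictionary: each resource declares its desired state, and the hypothetical `converge` function changes only what differs, so applying the same recipe a second time makes no changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    """A hypothetical declared resource, e.g. a package or a config file."""
    kind: str
    name: str
    desired: str  # the state the recipe asks for, e.g. "installed" or file content


def converge(recipe: list[Resource], node_state: dict[tuple[str, str], str]) -> list[str]:
    """Bring node_state in line with the recipe and return a log of changes.

    Resources already in their desired state are skipped, so a second run
    against the same node changes nothing (idempotence).
    """
    changes = []
    for res in recipe:
        key = (res.kind, res.name)
        current = node_state.get(key)
        if current != res.desired:
            node_state[key] = res.desired
            changes.append(f"{res.kind}[{res.name}]: {current!r} -> {res.desired!r}")
    return changes


if __name__ == "__main__":
    recipe = [
        Resource("package", "nginx", "installed"),
        Resource("file", "/etc/motd", "Managed by configuration management"),
    ]
    node = {("package", "nginx"): "installed"}
    print(converge(recipe, node))  # first run: only the file is changed
    print(converge(recipe, node))  # second run: nothing left to do
```

Skipping resources that already match is what makes it safe to apply the same recipe repeatedly across a large fleet of machines.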

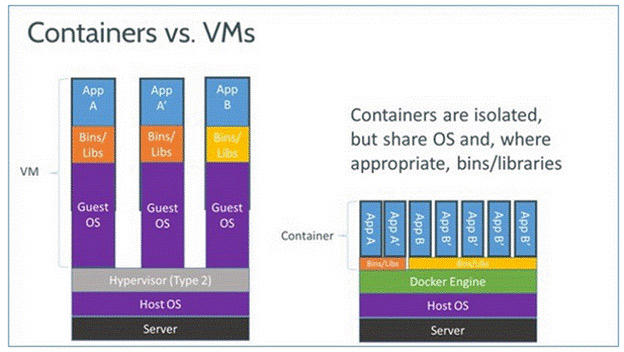

2. Docker

Docker is a container technology that’s transforming the way enterprises package, deploy and manage applications. Unlike virtual machines, where a hypervisor runs a separate guest operating system for each workload, containers share the host’s OS kernel. This means you can deploy as many containerized applications to a single cloud instance as its capacity allows, so you get more utilization from the same resource. From a DevOps perspective, containers are a more efficient way to move applications between test and production environments. This is because they’re lightweight, portable and self-sufficient packages that bundle an application with all its configuration settings and dependencies, which means no more manual installation and fewer troubleshooting issues. Docker has emerged as the front-runner in container technology through its ease of use and by leading the way towards container standardization.
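The capacity point above can be made concrete with a back-of-the-envelope calculation. The Python sketch below assumes every container is given a fixed CPU and memory reservation and that some capacity is held back for the host OS and container runtime; the `Resources` type, the `max_replicas` function and all figures are hypothetical, and real deployments may overcommit or run containers without limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    cpu_millicores: int  # 1000 millicores = 1 vCPU
    memory_mib: int


def max_replicas(instance: Resources, container: Resources, reserved: Resources) -> int:
    """Count how many identical containers fit on one instance.

    `reserved` is capacity set aside for the host OS and container runtime,
    which all containers share instead of each needing its own guest OS.
    """
    if container.cpu_millicores <= 0 or container.memory_mib <= 0:
        raise ValueError("container reservations must be positive")
    free_cpu = instance.cpu_millicores - reserved.cpu_millicores
    free_mem = instance.memory_mib - reserved.memory_mib
    if free_cpu <= 0 or free_mem <= 0:
        return 0
    # The scarcer resource (CPU or memory) limits the count.
    return min(free_cpu // container.cpu_millicores, free_mem // container.memory_mib)


if __name__ == "__main__":
    instance = Resources(cpu_millicores=4000, memory_mib=16384)
    host = Resources(cpu_millicores=500, memory_mib=1024)
    app = Resources(cpu_millicores=250, memory_mib=512)
    print(max_replicas(instance, app, host))
```

With these made-up figures, a 4 vCPU, 16 GiB instance with 0.5 vCPU and 1 GiB reserved for the host fits 14 containers that each reserve 0.25 vCPU and 512 MiB. Memory alone would allow 30, so CPU is the limiting resource here.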

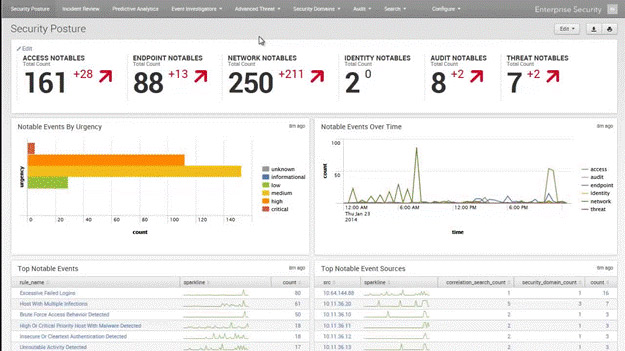

3. Splunk

Splunk is an operational intelligence tool that aggregates and analyzes machine data. You can use it to harvest information from log files from virtually any source, whether in a physical, virtual or cloud environment. Its core feature is a powerful data search engine, accessible through a web interface. The best way to understand the value of Splunk is to look at typical uses for the information it collects. You can optimize cloud environments by tracking real-time CPU, memory and disk consumption and triggering alerts when resources are over- or underutilized. You can monitor events that signify potential security breaches. Or you can gather data from an e-commerce website to analyze customer behavior.
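In Splunk itself, this kind of alert is typically configured as a saved search, written in the Search Processing Language (SPL), with a trigger condition. The Python sketch below is not Splunk code; it only illustrates the over- and underutilization logic described above, and the metric names, the `Threshold` type and the percentage limits are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    low: float   # below this percentage, the resource counts as underutilized
    high: float  # above this percentage, the resource counts as overutilized


def utilization_alerts(sample: dict[str, float], thresholds: dict[str, Threshold]) -> list[str]:
    """Compare one sample of utilization percentages against per-metric limits."""
    alerts = []
    for metric, value in sample.items():
        t = thresholds.get(metric)
        if t is None:
            continue  # no alert rule configured for this metric
        if value > t.high:
            alerts.append(f"{metric} over-utilized: {value:.0f}% > {t.high:.0f}%")
        elif value < t.low:
            alerts.append(f"{metric} under-utilized: {value:.0f}% < {t.low:.0f}%")
    return alerts


if __name__ == "__main__":
    limits = {
        "cpu": Threshold(low=10, high=85),
        "memory": Threshold(low=10, high=90),
        "disk": Threshold(low=5, high=90),
    }
    for alert in utilization_alerts({"cpu": 93.0, "memory": 41.0, "disk": 4.0}, limits):
        print(alert)
```

Flagging both directions matters in the cloud: an overutilized instance risks outages, while an underutilized one is capacity you are paying for but not using.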

Splunk SIEM Dashboard

4. GitHub

GitHub is best known as a public platform for publishing and sharing code. But it’s also a powerful hosted version control service built on Git, with private repositories and a range of collaboration features and task management tools. It offers enterprises significant DevOps benefits by allowing any number of team members to work on the same code base without worrying about duplicate versions or having to send files back and forth. One of the most useful features is the ability to roll back to a previous commit if something goes wrong. Another notable feature is branching, which allows you to safely develop new functionality in a separate line of work without affecting the main code base. You then only need to merge the code back into the default branch once you’ve successfully completed and tested it.

5. Jenkins

Jenkins is a cross-platform continuous integration (CI) and continuous delivery (CD) engine for automating and streamlining the software development process. You can hook it up to a wide variety of other DevOps tools, such as Chef, GitHub and Docker, to orchestrate the entire CI/CD pipeline. It can run automated testing tools, merge code from different sources into a single build, validate communication between different application components and deploy code to production environments. It can also monitor feedback, quickly identify runtime errors and roll back to previous application builds. By integrating development, testing and deployment technologies into a single workflow, Jenkins frees developers to spend more time on coding, manual testing and other value-added activities.
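Jenkins pipelines are usually defined in a Jenkinsfile using Groovy-based syntax, so the Python below is not Jenkins code. It is a minimal sketch of the control flow described above, assuming hypothetical stage checks, a `deploy` callable and a post-deployment `health_check`: stop at the first failing stage, deploy only a build that passes every stage, and roll back to the last known-good build if the new one fails in production.

```python
from typing import Callable

# A stage is a (name, check) pair; check() returns True if the stage passed.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(
    stages: list[Stage],
    deploy: Callable[[str], None],
    health_check: Callable[[], bool],
    candidate: str,
    last_good: str,
) -> str:
    """Run the pipeline and return the build label left running in production."""
    for name, check in stages:
        if not check():
            # Fail fast: the candidate never reaches production.
            print(f"stage {name!r} failed; {last_good} stays deployed")
            return last_good
    deploy(candidate)
    if health_check():
        return candidate
    # Runtime errors after release: redeploy the previous known-good build.
    deploy(last_good)
    return last_good


if __name__ == "__main__":
    deployed: list[str] = []
    stages = [("build", lambda: True), ("unit tests", lambda: True)]
    print(run_pipeline(stages, deployed.append, lambda: False, "v2", "v1"))
    print(deployed)  # the candidate was deployed, then rolled back
```

Passing the stages, deployment and health check in as functions keeps the orchestration logic separate from the tools that do the actual work, which mirrors how a CI/CD server delegates each step to other tools.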

How to Choose the Right DevOps Tools

The reason DevOps is so hard to define is that it encompasses such a wide range of lean and agile approaches. By the same token, no single tool can accomplish all your DevOps processes and objectives. The specific tools you choose will depend on your own enterprise computing environment and the most pressing needs of your IT teams. For example, if you need to manage complex infrastructure in a large-scale multi-cloud environment, your first priority will likely be tools that manage infrastructure as code, such as Chef or Puppet.

Your exact choice will then come down to subtle differences in the way the tools work, or even simple personal preference. For example, Puppet is designed to be easy to learn and use, whereas Chef is a more complex, mature and feature-rich platform. Some teams will initially seek out tools to aid continuous integration (CI) and continuous deployment (CD), while others may prefer to start by improving feedback and collaboration.

Other factors to consider include whether you run Windows or Linux in your on-premises and cloud environments. You may also want to avoid tools that rely on a command-line interface and instead run everything from an easy-to-use dashboard. And a tool that supports an extensive ecosystem of third-party services may have the edge over its rivals. Whatever tools your enterprise decides to use, it’s important to start small: pick an easy project and an IT team that’s willing and able.

Once you’ve successfully implemented DevOps tools in your first project, you’ll soon be able to demonstrate their business value. And that will be the green light your enterprise needs to approve further DevOps adoption.